Together with connected car technology and new energy vehicles, advanced driver assistance systems (ADAS) and autonomous vehicle systems have moved to the forefront of innovation in the automobile industry. Research, development, and production activities are significantly increasing due to demand for greater traffic safety, enhanced passenger and driver comfort, and ultimately for improved driving efficiency. Over 90% of the innovation in these areas is directly related to electronics, semiconductors, and the increasingly sophisticated software solutions enabled by the electronics and processing capability. With so many options becoming available for these computing architectures, it’s important that the industry establish a standard that will allow system designers to select the appropriate hardware and software components.

Ever since the first successful autonomous vehicle experiments in the late 1980s in both the U.S. [1] and Germany [2], engineers have been focusing their efforts on improving the perception and cognition of self-driving vehicles. Early work at Carnegie-Mellon focused primarily on vision and computing platforms, making use of TV cameras, early versions of LiDAR, sonar, and several state-of-the-art on-board computers [3]. By 1989, these researchers were experimenting with rudimentary neural networks and artificial intelligence for directional control in response to the sensor inputs [4].

Today, nearly thirty years since these pioneering efforts, the basic principles of autonomous driving research remain largely the same: perception, cognition, and control. These core activities are often described as “sensing,” “thinking,” and “acting.” Sensing still depends heavily on vision and LiDAR, though Radar has become an important additional component. There is now some debate in the industry over whether it will be possible to replace LiDAR entirely with a lower-cost multi-camera, multi-Radar system, or whether the expected cost reductions in LiDAR from the introduction of solid-state technology, along with the associated improved aesthetics of not having the radar sensors embedded in the front of the car, will instead make cameras and Radar systems less important. Vehicle-to-Vehicle and Vehicle-to-Infrastructure communication (together, V2X) can provide additional sensory input to the self-driving vehicle. The thinking and acting functions are also continuing to develop, requiring ever more powerful state-of-the-art processing capability (typically massively parallel processors) and evolving towards machine learning and artificial intelligence.

In order to better understand the requirements for ADAS and autonomous vehicle processing systems, a distinction must first be made between the different levels of autonomous driving [5]. While the distinction between the levels is sometimes fuzzy, the levels themselves have been standardized by the Society of Automotive Engineers (SAE). Each subsequent level requires exponentially increasing compute performance, an increasing number of sensors, and an increasing level of legal, regulatory, and even philosophical approvals.

- Level-0: These driver assistance systems have no vehicle control, but typically issue warnings to the driver. Examples of Level-0 systems include blind-spot detection or simple Lane Keeping Assistance (LKA) systems that don’t take control of the vehicle. These systems require reasonably sophisticated sensor technology and enough processing capability to filter out some false positives (alerting the driver to a hazard that is not real). However, since the ultimate vehicle control remains with the driver, the processing demands are not as great as with the higher levels of autonomy.

- Level-1: The driver is essentially in control of the vehicle at all times, but the vehicle is able to temporarily take control from the driver in order to prevent an accident or to provide some form of assistance. Level-1 automated systems may include features such as Adaptive Cruise Control (ACC), Automatic Emergency Braking (AEB), Parking Assistance with automated steering, and LKA systems that will actively keep the vehicle in the lane unless the driver is purposely changing lanes. Level-1 is the most popular form of what can reasonably be considered “self-driving technology” in production today. Since Level-1 does not control the vehicle under normal circumstances, it requires the least amount of processing power of the autonomous systems. With Level-1 systems and above, the avoidance of false positives becomes an important consideration in the overall system design. For example, the sudden erroneous application of the brakes by a vehicle on the freeway when the car does not need to slow down or stop could actually introduce a far greater safety hazard than the one it was meant to avoid. One of the techniques being deployed to help avoid false sensor readings is sensor fusion: fusing inputs from various disparate sensors, like camera and radar, to stitch together a more accurate picture of the vehicle’s environment.

- Level-2: The driver is obliged to remain vigilant in order to detect objects and events and respond if the automated system fails to do so. However, under a reasonably well-defined set of circumstances, the autonomous driving systems execute all of the accelerating, braking, and steering activities. In the event that a driver feels the situation is becoming unsafe, they are expected to take control of the vehicle and the automated system will deactivate immediately. Tesla’s Autopilot is a well-known example of Level-2 autonomy, as was the ill-fated Comma One by comma.ai [6].

- Level-3: Within known, limited environments, such as freeways or closed campuses, drivers can safely turn their attention away from the driving task and reasonably start doing other tasks. Nonetheless, they must still be prepared to take control when needed. In many respects Level-3 is believed to be one of the more difficult levels to manage, at least from the perspective of human psychology. When a Level-3 system detects that it will need to revert control of the vehicle back to the driver, it must first be able to capture the driver’s attention and then effect a smooth hand-over before relinquishing control. This is a remarkably difficult thing to do, and it could take as long as ten seconds before a driver who was engrossed in some non-driving-related task is fully ready to take over. Many, though not all, automakers have announced plans to skip Level-3 entirely as they advance their autonomous driving capabilities.

- Level-4: The automated system can control the vehicle in all but a few conditions or environments, such as severe weather or locations that have not been sufficiently mapped. The driver must enable the automated system only when it is safe to do so. When enabled, driver attention is not required, making Level-4 the first level that can genuinely be called fully autonomous in the sense that a driver or passenger would have a reasonable expectation of being able to travel from one destination to another on a variety of public and private roads without ever having to assume control of the vehicle. This is the level of autonomy that most automakers, and companies like Waymo, are working towards as a first step when they claim they will have fully autonomous cars on the road in the next three to five years. The sensing, thinking, and control functions must all be extremely powerful and sophisticated in a fully functional Level-4 vehicle, though to some extent, the ability to “fence off” particularly difficult roads or prohibit operation in severe weather allows for some limitations in the system’s sensing and learning capability. At least some of the Level-4 vehicles in development will likely be produced without any driver control capabilities at all.

- Level-5: Other than setting the destination and starting the system, no human intervention is required; the automatic system can drive to any location where it is legal to drive and make its own decisions. True Level-5 autonomous driving is still a number of years out, as these vehicles will require extremely robust systems for every activity: sensing, thinking, learning, and acting. The perception functions must avoid false positives and false negatives in all weather conditions and on any roads, whether those roads have been properly mapped out in advance or not. Most likely Level-5 vehicles will not have any driver controls.
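
The sensor fusion idea mentioned under Level-1 can be illustrated with a minimal Python sketch. It is not taken from any production system: the `Detection` type, the 2-meter gating distance, and the braking thresholds are all hypothetical values chosen for illustration. The sketch only acts on a camera detection when a radar detection lies close to it, which is one simple way to suppress a false positive reported by a single sensor.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Detection:
    """One object reported by a single sensor, in vehicle coordinates (meters)."""
    x: float  # distance ahead of the vehicle
    y: float  # lateral offset from the vehicle centerline


def fuse(camera, radar, gate_m=2.0):
    """Return the camera detections that some radar detection confirms.

    A camera detection counts as confirmed when a radar detection lies
    within gate_m meters of it (gate_m is an assumed tuning value).
    Unconfirmed detections are dropped as likely false positives.
    """
    confirmed = []
    for cam in camera:
        if any(hypot(cam.x - rad.x, cam.y - rad.y) <= gate_m for rad in radar):
            confirmed.append(cam)
    return confirmed


def should_brake(camera, radar, brake_distance_m=30.0, lane_half_width_m=1.5):
    """Request braking only for a confirmed object that is in lane and close ahead."""
    return any(
        0.0 < obj.x <= brake_distance_m and abs(obj.y) <= lane_half_width_m
        for obj in fuse(camera, radar)
    )


if __name__ == "__main__":
    # The second camera detection stands in for a shadow that radar does not see.
    camera = [Detection(25.0, 0.2), Detection(18.0, -0.4)]
    radar = [Detection(25.6, 0.0)]
    print(fuse(camera, radar))
    print(should_brake(camera, radar))
    print(should_brake([Detection(18.0, -0.4)], []))
```

Requiring agreement between sensors trades some sensitivity for fewer erroneous brake applications; a real system would weigh that trade-off against the risk of false negatives.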

While a future in which self-driving vehicles are ubiquitous may still be many years away, there are already hundreds of companies deploying thousands of engineers to develop ADAS and autonomous vehicle systems across all levels of autonomy. Existing production ADAS systems and autonomous systems already rely on sophisticated sensing in order to accurately perceive the vehicle’s environment, and on powerful real-time processing. In addition, because these systems are handling so much data, automakers have started transitioning from traditional In-Vehicle-Networking architectures like CAN to higher bandwidth protocols like Ethernet for transporting the signals and data around the vehicle. This situation becomes magnified as sensor fusion becomes the norm and sensor input data must be routed to a central processing unit in order to comprehensively analyze the vehicle’s position and surroundings. Of course, this will place even more demand on the system’s processing capabilities and make the decision on which processing platform to use all the more important.
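
A rough calculation shows why raw sensor data outgrows CAN. The Python sketch below assumes a single hypothetical camera (1280x720 pixels, 30 frames per second, 12 bits per raw pixel) and ignores protocol overhead and compression; the camera parameters are illustrative assumptions, while the link rates are the nominal figures for Classical CAN and common Ethernet speeds.

```python
def raw_video_bitrate(width, height, fps, bits_per_pixel):
    """Uncompressed video bit rate in bits per second."""
    return width * height * fps * bits_per_pixel


# Nominal link rates in bits per second (protocol overhead ignored).
LINKS = {
    "Classical CAN (1 Mbit/s)": 1_000_000,
    "100 Mbit/s Ethernet": 100_000_000,
    "1 Gbit/s Ethernet": 1_000_000_000,
}

if __name__ == "__main__":
    # Assumed camera: 1280x720, 30 frames/s, 12 bits per raw pixel.
    rate = raw_video_bitrate(1280, 720, 30, 12)
    print(f"raw camera stream: {rate / 1e6:.1f} Mbit/s")
    for name, capacity in LINKS.items():
        print(f"{name}: stream is {rate / capacity:.2f}x the link rate")
```

Under these assumptions one uncompressed stream is roughly 332 Mbit/s, hundreds of times the Classical CAN rate, and a surround-view system carries several such streams.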

Comparing Compute Platforms for ADAS and Autonomous Systems

Every ADAS system, and therefore every level of autonomous driving, will require some form of complex embedded computing architecture. These architectures could contain a variety of CPU cores, GPUs, and specialized hardware accelerators. An example of such a computing platform is NVIDIA’s multi-chip solution, DRIVE PX 2, which includes two mobile processors and two discrete GPUs. Contrast this to NXP’s BlueBox which incorporates an automotive vision/sensor fusion processor and an embedded compute cluster (eight ARM processor cores, an image processing engine, a 3D GPU, etc.). Both companies claim that their devices have the capability for full autonomous driving. How can an ADAS or autonomous driving system engineer compare these two platforms, as well as platforms from Analog Devices, Qualcomm, Intel, Mobileye, Renesas, Samsung, Texas Instruments, or other semiconductor suppliers?

Ultimately the production computing platform must be selected based on factors that include compute performance, energy consumption, price, software compliance, and portability (such as the application programmer interface or API). Identifying the potential compute performance and energy consumption of a heterogeneous embedded architecture is an overwhelming and inaccurate task with the currently available benchmarks, as they focus either on monolithic application use cases, or on isolated compute operations. Real world scenarios on these architectures require an optimal utilization of the available compute resources, in order to accurately reflect the application use case. This often implies the proper balancing of the compute tasks across multiple compute devices and separate fine tuning for their individual performance profiles, which in turn requires intimate knowledge of the architectures of individual compute devices, and of the heterogeneous architecture as a whole. To support this effort, key semiconductor vendors and automotive OEMs are working with EEMBC to develop a performance benchmark suite that both assists in identifying the performance criteria of the compute architectures, and in determining the true potential of the architectures for various levels of autonomous driving platforms. EEMBC is an industry association focused on developing benchmarks for various embedded applications, including ADAS.

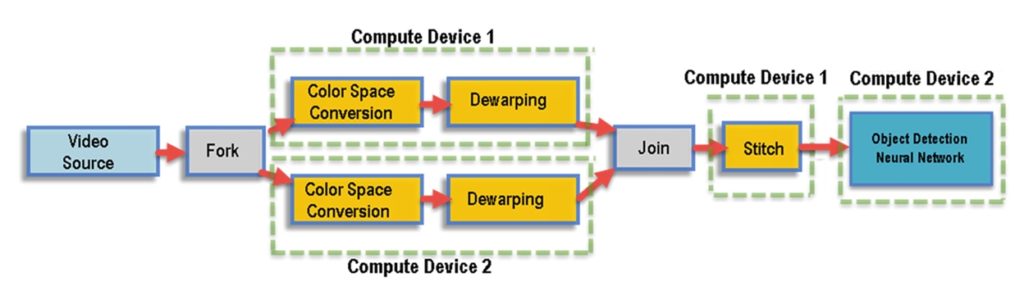

ADAS is highly reliant on vision processing. In a typical application use case, multiple camera images are normalized and stitched into a seamless surround view, and certain objects of interest (e.g., pedestrians, road signs, and obstacles) are then detected in the resulting images. The processing of this information is done in a pipeline flow comprised of discrete elements. Hence, the overall goal of this compute benchmark is to measure the complete application flow from end to end as well as the discrete elements (using micro-benchmarks). This allows the user to comprehend the performance of the system as a whole, and allows the benchmark to be used as a detailed performance analysis tool for SoCs and for optimizing the discrete elements (Figure 1).

Figure 1: Simplified example of ADAS processing flow with discrete pipeline elements distributed among various compute devices. Video stream input splits into two parallel sections for color space conversion (Bayer) and de-warping (to remove distortion); the Join stage stitches the 2D polygons back together, creating a top-down view. The stitching stage is followed by a neural network that performs object detection. In a complete ADAS application this output would then be used to make decisions on which the vehicle would act.
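
The idea of measuring both the discrete elements and the complete flow can be sketched in a few lines of Python. This is not the EEMBC benchmark: the four stage functions are trivial placeholders operating on lists of integers, the stages run serially on one CPU rather than in parallel across devices, and every name here is hypothetical.

```python
import time


def color_convert(frame):
    # Placeholder for Bayer color space conversion.
    return [p * 2 for p in frame]


def dewarp(frame):
    # Placeholder for lens-distortion removal.
    return frame[::-1]


def stitch(frames):
    # Placeholder for the Join stage: concatenate the per-camera frames.
    return [p for f in frames for p in f]


def detect(image):
    # Placeholder for the neural-network stage: count "bright" pixels.
    return sum(1 for p in image if p > 200)


def run_pipeline(camera_frames):
    """Run every stage once; return (result, per-stage seconds, end-to-end seconds)."""
    timings = {}

    def timed(name, fn, arg):
        # Micro-benchmark: accumulate the time spent inside one stage.
        start = time.perf_counter()
        out = fn(arg)
        timings[name] = timings.get(name, 0.0) + time.perf_counter() - start
        return out

    t0 = time.perf_counter()
    processed = [
        timed("dewarp", dewarp, timed("color_convert", color_convert, f))
        for f in camera_frames
    ]
    image = timed("stitch", stitch, processed)
    result = timed("detect", detect, image)
    total = time.perf_counter() - t0  # end-to-end time, including glue code
    return result, timings, total


if __name__ == "__main__":
    frames = [list(range(256)) for _ in range(4)]  # four synthetic cameras
    result, timings, total = run_pipeline(frames)
    print("objects:", result)
    for name, seconds in timings.items():
        print(f"{name}: {seconds * 1e6:.0f} us")
    print(f"end-to-end: {total * 1e6:.0f} us")
```

Reporting the per-stage numbers alongside the end-to-end figure makes it visible when overhead between stages, rather than any single stage, dominates the total.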

Optimizations in a Benchmark?

One of the key points for a real-world ADAS benchmark is to enable the user to determine the true potential of the compute architecture, instead of relying on benchmark numbers from software that will typically be biased towards the reference platform it was developed on. This true potential discovery is only possible if the code is optimized for the given architecture; hence, vendors can implement manual optimizations using a common interface that demonstrates their processor’s baseline performance. Optimizations following the standard API will be verified by EEMBC through code inspection and comparative testing with a randomly generated input sequence. If users want to utilize proprietary extensions, the result becomes an unverified custom version, but will ultimately demonstrate the full potential of the intended architecture. Even following the standard benchmarking methodology, there are still many possible combinations to distribute the micro-benchmarks across compute devices. Ultimately, the benchmark will serve as an analytical tool that automatically tracks the various combinations and allows the user to make tradeoffs for performance, power, and system resources. In other words, for devices that are power constrained, highest performance is not always optimal. Each compute device in an ADAS architecture has a certain power profile, and by adding that information to the optimization algorithm, the algorithm can be adjusted to find the best performance at a defined power profile.
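
The tradeoff between performance and power across device combinations can be made concrete with a small Python sketch. It is an illustration only, not the benchmark's actual optimization algorithm: the stage names, device names, latencies, and power figures are invented, stages are assumed to run one after another, and a device is assumed to draw its full active power whenever at least one stage is mapped to it.

```python
from itertools import product

STAGES = ["color_convert", "dewarp", "stitch", "detect"]
DEVICES = ["cpu", "gpu", "accelerator"]

# Hypothetical latency in milliseconds of each stage on each device.
LATENCY_MS = {
    "color_convert": {"cpu": 8.0, "gpu": 2.0, "accelerator": 3.0},
    "dewarp": {"cpu": 12.0, "gpu": 3.0, "accelerator": 4.0},
    "stitch": {"cpu": 6.0, "gpu": 2.0, "accelerator": 5.0},
    "detect": {"cpu": 40.0, "gpu": 10.0, "accelerator": 6.0},
}

# Hypothetical active power in watts of each device when it is used at all.
ACTIVE_POWER_W = {"cpu": 2.0, "gpu": 6.0, "accelerator": 3.0}


def best_mapping(power_budget_w):
    """Exhaustively search stage-to-device mappings.

    Returns (latency_ms, power_w, mapping) for the lowest-latency mapping
    whose power stays within the budget, or None if no mapping fits.
    """
    best = None
    for devices in product(DEVICES, repeat=len(STAGES)):
        power = sum(ACTIVE_POWER_W[d] for d in set(devices))
        if power > power_budget_w:
            continue
        latency = sum(LATENCY_MS[s][d] for s, d in zip(STAGES, devices))
        if best is None or latency < best[0]:
            best = (latency, power, dict(zip(STAGES, devices)))
    return best


if __name__ == "__main__":
    for budget in (10.0, 6.0, 5.0, 1.0):
        print(budget, best_mapping(budget))
```

With these made-up numbers, the fastest mapping uses the GPU and the accelerator together, but a tighter budget forces everything onto a single device at a higher latency. Exhaustive search is practical only for a handful of stages and devices; larger pipelines would need a smarter search.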

The Tangible Intangibles

Besides the benchmark performance comparisons addressed above, automakers and system engineers must also consider a number of soft factors when selecting computing platforms for ADAS and autonomous driving development, such as relationships, roadmap alignment, and the ability to enable a broader software ecosystem. For example, BMW has recently announced a partnership with Intel and Mobileye. Likewise, Audi and Tesla have both established partnerships with NVIDIA. These tie-ups may give certain players advantages over others, even in the event that benchmarking performance is comparable. In addition, while some automakers are developing their own in-house software capability, many of the players in the rapidly evolving autonomous driving software ecosystem are start-ups. These new companies are developing everything from driving-control applications to abstraction layers between the hardware and the application layers. A few are even developing complete vehicle operating systems. While most software start-ups attempt to remain hardware-agnostic, at least in theory, the reality is that many only have the resources to develop their systems on one or two hardware platforms. Once the vehicle system engineers at the automaker or a major Tier-1 supplier choose a particular computing platform, they are in effect pre-defining the software ecosystem to which they will potentially have access.

Another important consideration in the selection of the computing architecture is the overall automotive capability of the processor supplier. Certainly quality and device reliability are parts of this equation. While qualifying ICs to AEC-Q100 is no longer the barrier to entry it used to be, ADAS and autonomous driving are safety-critical applications and, as such, compliance with functional safety standards (ISO 26262) is an important criterion when selecting vendors for production programs. But automotive capability goes beyond quality. Supply chain reliability should be equally important when evaluating systems intended for production vehicle programs. This means understanding not only where parts are manufactured, but also how much control the supplier has over those manufacturing locations, how quickly alternate sources can be brought online, and how much priority you will be afforded in the event of a supply chain disruption.

Many people in the automobile industry have experienced business disruptions resulting from such natural disasters as floods, earthquakes, and fires. No supplier is 100% immune from such forces majeures but the truly automotive capable suppliers understand how to deal with these situations and have risk mitigation plans in place to protect their automotive customers.

No Right Answer but There is a Right Process

Clearly there is no one right answer to the question: “what is the best compute architecture for ADAS and autonomous driving?” The right processing platform will very much depend on a number of system-specific technical requirements centered on processing power and energy consumption. Having the best possible benchmark is an ideal place to start the technical part of the evaluation. But beyond the benchmark, it is critical that system engineers also have a longer-term view to the broader ecosystem they wish to enable and to the enterprise level capabilities of the suppliers under evaluation. In this way, system engineers can transition rapidly and smoothly from being at the forefront of automotive innovation to being at the very core of tomorrow’s production vehicles.

References

[1] The Defense Advanced Research Projects Agency funded Autonomous Land Vehicle project at Carnegie-Mellon University.

[2] Mercedes-Benz together with the Bundeswehr University Munich.

[3] Kanade, T. & Thorpe, C. (1986). CMU strategic computing vision project report: 1984 – 1985. Retrieved from http://repository.cmu.edu/robotics

[4] Pomerleau, D.A. (1989). ALVINN, an autonomous land vehicle in a neural network. Retrieved from http://repository.cmu.edu/robotics

[5] https://en.wikipedia.org/wiki/Autonomous_car#Classification

[6] Abuelsamid, S. (2016). Lessons From The Failure Of George Hotz And The Comma One Semi-Autonomous Driving System. Forbes. http://www.forbes.com/sites/samabuelsamid/2016/11/01/thoughts-on-george-hotz-and-the-death-of-the-comma-one/2/ – 1bd957250ec1

Featured image courtesy of NXP Semiconductors.

About the Authors

Markus Levy is president of EEMBC, which he founded in April 1997. As president, he manages the business, marketing, press relations, member logistics, and supervision of technical development. Mr. Levy is also president of the Multicore Association, which he co-founded in 2005. In addition, Mr. Levy chairs the IoT Developers Conference and Machine Learning DevCon. He was previously founder and chairman of the Multicore Developers Conference, a senior analyst at In-Stat/MDR, and an editor at EDN magazine, focusing in both roles on processors for the embedded industry. Mr. Levy began his career in the semiconductor industry at Intel Corporation, where he served as both a senior applications engineer and customer training specialist for Intel’s microprocessor and flash memory products. He is the co-author of Designing with Flash Memory, the one and only technical book on this subject, and received several patents while at Intel for his ideas related to flash memory architecture and usage as a disk drive alternative.

Drue Freeman is a 30-year semiconductor veteran. He advises and consults for technology companies ranging from early-stage start-ups to multi-billion dollar corporations and for financial institutions investigating the implications of the technological disruptions impacting the automotive industry. Previously, Mr. Freeman was Sr. Vice President of Global Automotive Sales & Marketing for NXP Semiconductors. He has participated in numerous expert panels and spoken at various conferences on Intelligent Transportation Systems. Mr. Freeman helped found and served on the Board of Directors for Datang-NXP Semiconductors, the first Chinese automotive semiconductor company, and spent four years as VP of Automotive Quality at NXP in Germany. He is an Advisory Board Member of BWG Strategy LLC, an invite-only network for senior executives across technology, media and telecom, and a member of Sand Hill Angels, a group of successful Silicon Valley executives and accredited investors that are passionate about entrepreneurialism and the commercialization of disruptive technologies.

Rafal Malewski leads the Graphics Technology Engineering Center at NXP Semiconductors, a GPU-centric group focused on graphics, compute, and vision processing for the i.MX microprocessor family. With over 16 years of experience in embedded graphics and multimedia development, he spans the full vertical across GPU hardware architecture, drivers, middleware, and application render/processing models. Mr. Malewski is also the EEMBC Chair for the Heterogeneous Compute Benchmark working group.
